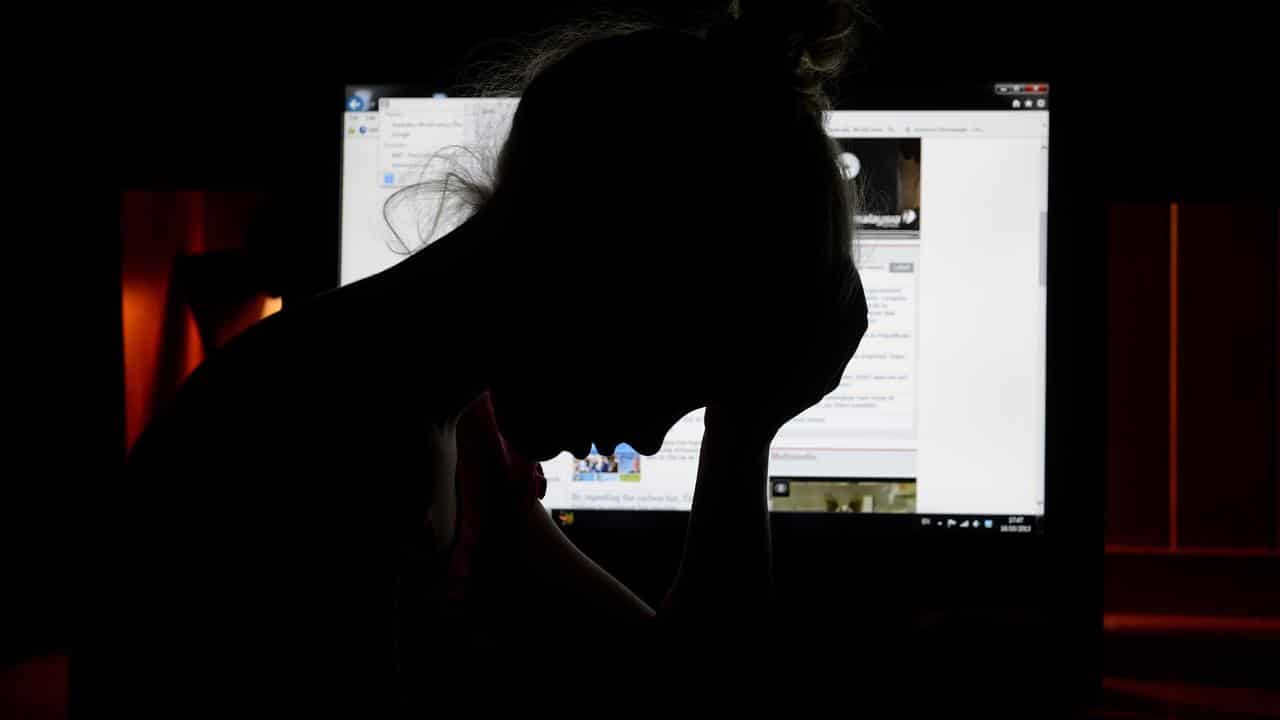

Social media platforms have been accused of not taking seriously their responsibility to shield users from hate speech and abuse online.

Changes to online safety laws are needed following a surge in anti-Semitic and Islamophobic abuse online, Communications Minister Michelle Rowland says.

"There is a gap in the regulatory framework, it's one that a number of jurisdictions are grappling with and it's one I look forward to taking measures on as part of the basic online safety expectations," she said in an address to the National Press Club on Wednesday.

"That is why we are addressing this gap in the framework by updating the expectations to include what the platforms are doing in relation to their own policies about minimising the impact of hate speech."

Ms Rowland said there was a role for governments to keep people safe online but the main responsibility rested with the platforms.

"It is a virtuous cycle for platforms to build into their own systems the ability to keep users safe," she said.

Under changes proposed by the minister, internet platforms would have to consider the best interests of children when designing their services.

Platforms would have to crack down on unlawful material created through artificial intelligence, lay out steps to detect hate speech and take measures to ensure children don't access inappropriate material online.

"This is a multi-faceted problem that regulators around the world are grappling with," Ms Rowland said.

"We're not going to be a government who says that big tech is too big and we can't address this power imbalance in the interest of Australian consumers."

A review of online safety laws, to be led by former consumer watchdog deputy chair Delia Rickard, will begin public consultations in early 2024.

It will focus on the eSafety Commissioner's complaint system, how the laws address online harm, and gaps in the legislation.

Ms Rowland said while online safety laws provided protection from harassment, there were no provisions to address abuse on the basis of religion or background.

"There is deep concern across the community about the way hateful language spreads online," she said.

"Australia needs our legislative framework to be strong, but also flexible enough to respond to an ever-evolving space."

International Justice Mission Australia chief advocacy officer Grace Wong said tech companies needed to be held accountable.

"Big tech companies have refused to comply with Australia's basic online safety expectations to report on measures they take to protect children from online sexual abuse on their platforms," she said.

"It is essential the Online Safety Act is strengthened."